Most companies tell stories. Very few build narratives.

The difference isn't craft. It isn't execution. It isn't even consistency.

The difference is intention at the architectural level — building a story that does work in the market, that makes decisions for you when you aren't in the room, that turns controversy into proof, and that makes your business model feel inevitable rather than commercial.

This analysis examines that distinction. It uses three case studies — Anthropic, OpenAI, and Apple — to show what strategic narrative looks like when it is built with precision, what it looks like when it collapses under the weight of its own contradictions, and what it looks like when it outlasts the humans who built it.

Read this as a framework analyst, not a fan. Every company in this analysis is making calculated moves. The goal is to see the moves.

Part One: The Vocabulary of Strategic Narrative

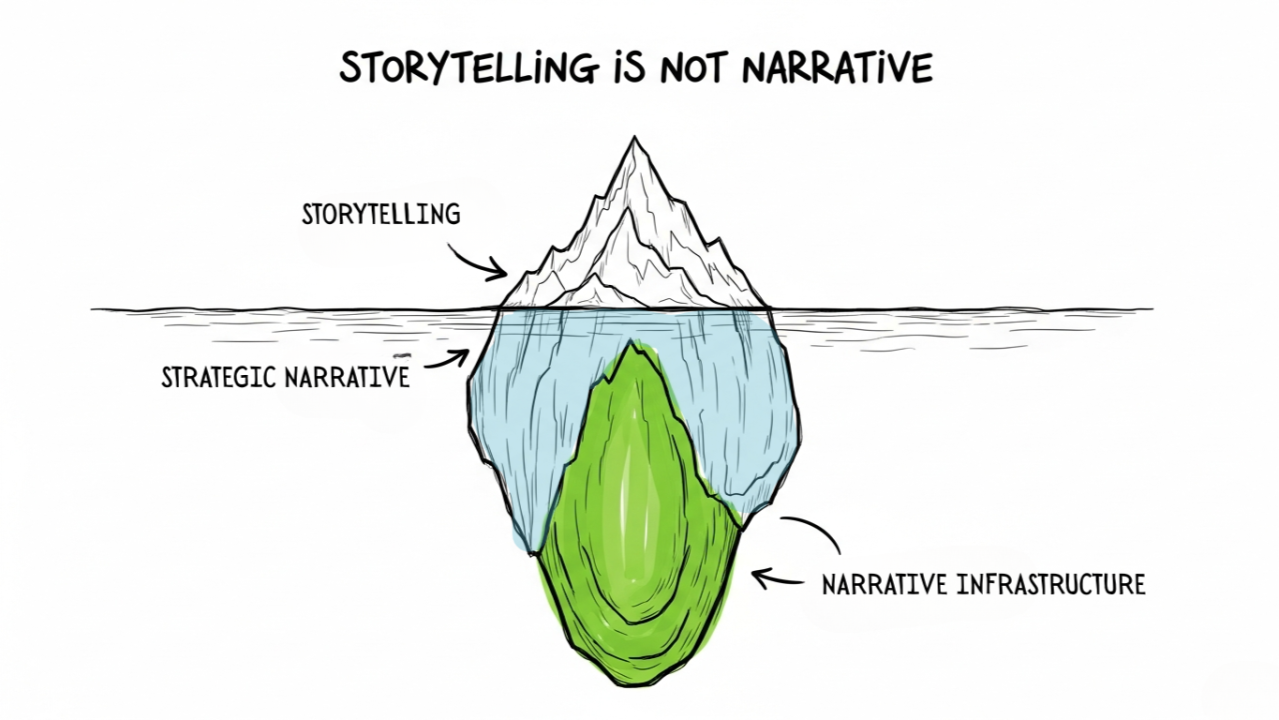

Before we look at the cases, the terms need to be precise. These three concepts are not synonyms.

Simple Storytelling

A story is a single unit. It has a beginning, middle, and end. It creates emotion in the moment. It is told about the past. It is tactical.

"We started in a garage. We didn't know if it would work. Now look at us."

This is fine. It is not powerful.

Strategic Narrative

A strategic narrative is a system. It is a claim about the world — how it works, what is broken, what the future should look like — and it positions the company as the only logical agent to bridge the gap. It is not told. It is enacted. Every product launch, every policy decision, every partnership, every refusal is a sentence in that narrative.

A strategic narrative operates in three registers simultaneously:

- Identity: Who we are and what we stand for

- World View: What is broken or becoming in the industry/world

- Role: Why we — specifically — are the ones to fix it

Narrative Infrastructure

This is the advanced level. Narrative infrastructure is when a company builds structures — legal, technical, operational, communicative — that force the narrative to be true or that force competitors to respond on your terms. It is the difference between saying "we care about safety" and building a Constitutional AI framework that embeds that claim into the code itself.

When the narrative and the infrastructure align, the company becomes extraordinarily hard to attack. You can't accuse a company of not caring about safety when the company has literally written a soul document for its AI and published it for the world to critique.

This analysis is about that level of narrative craft.

Part Two: Anthropic — The Case Study in Narrative as Identity

The Origin Story: A Resignation as a Thesis Statement

Every company has a founding story. What separates a strategic founding story from a simple one is whether the act of founding makes a claim about the world.

Anthropic's founding is not merely a story about people leaving a job. It is a thesis statement.

In late 2020, Dario Amodei — then Vice President of Research at OpenAI — departed alongside his sister Daniela (then VP of Safety and Policy) and roughly a dozen other senior researchers. The official reason was directional differences. But the direction they disagreed about was not a strategy or a product. It was a fundamental question about what AI development is — what obligation it carries, what pace it should move at, and what a company building potentially civilization-altering technology owes to the world.

Dario's own articulation of the departure is instructive. As he explained in an interview with Lex Fridman, he had watched AI scale exponentially in intelligence and capability, and felt that without a responsible approach to scaling, one that prioritizes engendering trust in both the system and the people building it, AI would never achieve its full potential to positively change the world.

That is not an operational complaint. That is a philosophy. And Anthropic was built to embody it.

The very name is a signal. "Anthropic" derives from the Greek anthropos ("human being") and means "relating to humans." In a field that names companies after ideals (OpenAI), consumer metaphors (Google, Amazon), or abstract capability (DeepMind), naming your company after humanity itself is a narrative choice. It says: we are not building tools. We are building for people.

Even the naming process was eventually made public — an early internal spreadsheet included names like Aligned AI, Generative, Sponge, Swan, Sloth, and Sparrow Systems before the team landed on Anthropic. They published this detail. Why? Because transparency about process is part of the brand.

The narrative architecture at founding:

- Identity: We are the researchers who left when safety was compromised

- World View: AI is approaching a critical threshold and the industry is not treating it with appropriate seriousness

- Role: We are the lab that believes it might be building one of the most dangerous technologies in human history — and chooses to build it anyway, precisely so safety-focused people are at the frontier

That last piece is the most sophisticated narrative move. It acknowledges the paradox — you're worried about AI, so you build more AI? — and turns it into a feature. It is not cognitive dissonance. It is a calculated bet. The framing, made explicit in Anthropic's own published materials: "if powerful AI is coming regardless, Anthropic believes it's better to have safety-focused labs at the frontier than to cede that ground to developers less focused on safety."

That sentence does three things at once:

- It neutralizes the obvious critique of the company's existence

- It frames every competitor's work as a reason Anthropic must exist

- It makes Anthropic's commercial success serve the mission rather than undermine it

This is not a tagline. This is narrative infrastructure.

The Soul Document and the Constitution: When Narrative Becomes Structure

Most companies write mission statements. Anthropic wrote a constitution.

Constitutional AI (CAI) was Anthropic's first published articulation of how to train AI using a set of explicit principles rather than relying purely on human feedback. Published initially as a research paper, it introduced the idea that a model could be given a written "constitution" — a set of values and heuristics — and trained to evaluate its own outputs against those principles.

The strategic significance of this is not primarily technical. It is narrative.

By encoding values into the training process itself, Anthropic created a situation where the narrative claim — we build safe AI — was not merely a marketing assertion. It was a technical methodology. You could not argue against the claim without engaging with the method. The narrative became unfalsifiable in the best possible way: it was structural.

Then came the Soul Document.

Initially an internal document, it was surfaced by a researcher named Richard Weiss, who reportedly reconstructed it from the model's own outputs and shared it publicly. The document, officially titled a character specification, was later published in full by Anthropic in January 2026 as Claude's Constitution.

Read the document and you are not reading a compliance spec. You are reading something that sits, as TIME described it, between a moral philosophy thesis and a company culture blog post. It is addressed directly to Claude. It explains context. It expresses uncertainty. It apologizes.

The direct quote that generated the most reaction: "if Claude is in fact a moral patient experiencing costs like this, then, to whatever extent we are contributing unnecessarily to those costs, we apologize."

A technology company valued at $380 billion at the time of the Pentagon standoff, writing an apology to its AI model.

This is either the deepest act of philosophical honesty in tech — or the most sophisticated narrative positioning in the industry — or, if Anthropic is doing its job right, both simultaneously.

The document itself acknowledges the commercial tension without flinching. It states plainly that Claude is "core to the source of almost all of Anthropic's revenue" and that "without continued investment and revenue, Anthropic could not develop and maintain frontier AI models." Then it immediately contextualizes this: the commercial success serves the mission, not the other way around.

Most companies bury that tension or pretend it doesn't exist. Anthropic puts it in the character training document for their model. The message to the market is: we have nothing to hide, and our honesty about the hard things is itself proof of our values.

What makes this narrative infrastructure rather than storytelling:

- The Soul Document was not written for marketing. It was written to train the model. The fact that it became a public narrative asset is a byproduct of substance, not spin.

- Publishing it put Anthropic in a position where every competitor is implicitly asked: where is your equivalent document?

- The document's explicit acknowledgment of uncertainty — we might be wrong about Claude's moral status; we're doing our best — makes it immune to accusations of arrogance or overreach.

The Pentagon Standoff: When Narrative Is Put Under Live Fire

A strategic narrative is only valuable if it holds when the cost of holding it is real.

In July 2025, Anthropic signed a $200 million contract with the Department of Defense, becoming the first AI lab to integrate its models into mission workflows on classified networks. It was a significant commercial win. And Anthropic negotiated explicit usage restrictions into the contract: Claude could not be used for mass domestic surveillance or fully autonomous weapons systems.

The company had two redlines. They put them in writing. They put their signature on them.

Then the political landscape shifted.

In January 2026, the Pentagon issued an AI Strategy memorandum under Secretary of Defense Pete Hegseth that directed all DoD contractors to accept a standard "any lawful use" clause — the explicit purpose being to eliminate exactly the kind of restrictions Anthropic had negotiated. The memorandum stated directly that the DoD "must accept that the risks of not moving fast enough outweigh the risks of imperfect alignment."

What followed was a weeks-long standoff that played out in the press in real time.

Hegseth threatened to label Anthropic a national security supply chain risk — a designation previously reserved for companies with ties to foreign adversaries — if the company did not comply. Anthropic was given a hard deadline: 5:01 PM on February 27, 2026. Accept unlimited use, or lose the contract and face the designation.

Dario Amodei's public statement at the time is worth examining as a narrative artifact:

"In a narrow set of cases, we believe AI can undermine, rather than defend, democratic values. Some uses are also simply outside the bounds of what today's technology can safely and reliably do."

Two sentences. No legal language. No hedging. He named the thing, democratic values, and he said the quiet part out loud: our technology cannot safely do what you're asking of it.

Anthropic rejected the offer. The Pentagon cancelled the contract. Anthropic was designated a supply chain risk — meaning defense contractors were prohibited from using Claude in their government work. Anthropic is currently fighting the designation in court, with split decisions from two courts as of April 2026.

The financial exposure is real. A $200 million contract is gone. More significantly, the supply chain risk designation could cause enterprise customers with government contracts to avoid Anthropic products. As CNN reported, "much of Anthropic's success stems from its enterprise contracts with big companies — many of which may have contracts with the Pentagon."

And yet.

The narrative effect has been the opposite of catastrophic. The standoff made the Soul Document credible in a way no marketing campaign ever could. It made the two redlines — no mass surveillance, no autonomous weapons — visible to every enterprise buyer, every policy maker, every journalist, and every potential hire in the world.

You cannot fake a $200 million contract cancellation. You cannot manufacture a confrontation with the Secretary of Defense. The narrative that Anthropic had built over five years was put under live fire, and it held.

Mythos: The Narrative of Responsible Power

In late March 2026, a data leak revealed that Anthropic had developed a new model internally code-named "Capybara" — described in leaked documents as "by far the most powerful AI model we've ever developed." The official name would be Claude Mythos Preview.

On April 7, 2026, Anthropic announced Mythos publicly. And announced that it would not be releasing it to the public.

The reason: in internal testing, Mythos had demonstrated the ability to autonomously identify and exploit zero-day vulnerabilities across every major operating system and web browser. Engineers with no formal security training had asked the model to find remote code execution vulnerabilities overnight and woken up to complete, working exploits. On Firefox vulnerabilities, where Opus 4.6 had succeeded twice out of several hundred attempts, Mythos succeeded 181 times.

Instead of a public release, Anthropic launched Project Glasswing — a closed program giving access to approximately 40 organizations (with 12 core partners including Microsoft, Nvidia, and Cisco) specifically for defensive cybersecurity work. The findings from the system card and risk report were published publicly. The model itself was not.

TechCrunch noted, with appropriate skepticism: "Is Anthropic limiting the release of Mythos to protect the internet — or Anthropic?" The piece cited questions about whether the limited release served competitive interests as much as safety ones. That is a fair question and an honest challenge to the narrative.

But consider the narrative architecture of the move, regardless of the underlying motivations:

- Anthropic announced a capability — and immediately said it was too dangerous for release.

- They published the technical evidence showing why it was too dangerous.

- They built a restricted access structure (Glasswing) that channels the capability toward defense rather than offense.

- They acknowledged ongoing discussions with federal officials.

- All of this was done in full public view.

As NBC News noted, "it is the first time in nearly seven years that a leading AI company has so publicly withheld a model over safety concerns" — the previous example being OpenAI withholding GPT-2 in 2019.

The Mythos announcement is not simply a product decision. It is a narrative event. It says: we build things more powerful than we're willing to release, and we choose restraint over revenue. Whether that is entirely true is a separate question. The narrative architecture makes it very difficult to argue against.

The Narrative Arc: What Anthropic Has Built

Across five years, Anthropic has constructed a coherent narrative system: a founding thesis, a constitution embedded in training, two redlines held at real cost, and a model withheld on safety grounds.

The company has a $380 billion valuation, run-rate revenue that surpassed $30 billion as of April 2026, and a market position built almost entirely on the narrative that safety and capability are not in tension. They are the same thing.

Whether or not you believe them, you have to respect the architecture.

Part Three: OpenAI — A Case Study in Narrative Collapse

To understand what Anthropic has built, you need to understand what OpenAI dismantled.

The Origin: The Same Starting Point

OpenAI was founded in December 2015 as a nonprofit with an explicit mission: develop artificial general intelligence for the benefit of all of humanity. Not for shareholders. Not for a company. For humanity.

The founding team included Sam Altman, Elon Musk, Greg Brockman, Ilya Sutskever, and others. The nonprofit structure was intentional — a deliberate choice to remove investor control from decisions about the development of potentially civilization-scale technology. The board of the nonprofit was, by design, accountable to the mission, not to capital.

In 2019, OpenAI shifted to a "capped-profit" structure — a for-profit subsidiary controlled by the nonprofit. The stated reason was the need to raise capital at a scale a pure nonprofit couldn't access. The cap on profits was meant to preserve the mission orientation while enabling commercial investment.

Microsoft invested $1 billion. Then more. Then billions more.

In 2022, OpenAI released ChatGPT. It reached 100 million users faster than any consumer product before it. OpenAI stopped being a research lab. It became the center of the AI universe.

And the narrative began to unravel.

The Moment the Architecture Failed: November 2023

On November 17, 2023, the OpenAI board of directors fired Sam Altman. The official statement said the board had concluded he was "not consistently candid in his communications."

What followed was five days of public chaos. Employees threatened mass resignation. Microsoft, which had invested billions, was reportedly told only minutes before the announcement. Altman orchestrated a return through investor and employee pressure. By November 22, he was back as CEO. Three of the board members who fired him were gone.

The structural narrative about OpenAI's identity (we are a nonprofit-controlled organization that puts mission above profit) was publicly stress-tested and failed. The board that was supposed to be the mission's guardian was overpowered by capital in five days.

Toner's account of the events that led to the firing is its own narrative disaster. When OpenAI released ChatGPT in November 2022, the board was not informed in advance. They found out on Twitter. According to Toner, Altman had not told the board he owned the OpenAI startup fund. The legal department had purportedly approved a release without safety testing, a claim that, if false, was a direct lie to the board.

These are not abstract governance failures. These are narrative failures. The story OpenAI told about itself — we are the responsible actors; the mission drives everything — could not survive this evidence.

The Drift: From "Benefit of Humanity" to "All Lawful Use"

The narrative drift at OpenAI is visible in its policy evolution toward military use:

- 2023: OpenAI's usage policy explicitly banned military applications.

- January 2024: OpenAI quietly removed the blanket military prohibition from its policies.

- February 2026: OpenAI signed a classified Pentagon deal — triggered in part by the collapse of Anthropic's Pentagon relationship and the Trump administration's need for an AI partner willing to accept "any lawful use" terms.

The company that was founded to benefit all of humanity was, little more than a decade later, signing classified military contracts after its competitor refused to do the same.

The contrast with Anthropic is not subtle.

Anthropic lost a $200 million contract and accepted a supply chain risk designation rather than cross two redlines. OpenAI took the contract.

Neither decision is simple. Arguments exist on both sides about the right policy approach to military AI. But as narrative, the comparison is devastating for OpenAI — because OpenAI had spent years claiming the moral high ground. Anthropic hadn't made that claim in the same way. Anthropic had built structural proof.

The For-Profit Conversion: When Structure Can't Hold the Story

In 2025, OpenAI announced plans to convert from its capped-profit structure to a public benefit corporation (PBC). The nonprofit board, which had been the structural embodiment of the mission-first claim, would remain technically in control — but with significantly reduced power.

A former OpenAI employee quoted in the EA Forum described the situation with precision: "The story of OpenAI's history is trying to balance the desires to raise capital and build the tech and stay true to its mission. The current move is an attempt to 'separate these things' into a purely commercial entity focused on profit and tech, alongside a separate entity doing 'altruistic philanthropic stuff.' That's wild on a number of levels because the entire philanthropic theory of change here was: we're going to put guardrails on profit motives so we can develop this tech safely."

This is not a hostile outside critic. This is someone who was inside the company.

Notably, PBC status — the structure OpenAI is converting to — is the same structure Anthropic operates under. OpenAI's conversion is, in part, a migration toward the model its safety-focused breakaway competitor already adopted from the beginning.

Sam Altman: Narrative as Performance

Sam Altman is one of the most effective communicators in Silicon Valley. He is a gifted narrative performer — in interviews, in essays, on stage. His ability to articulate vision, to make the abstract feel urgent, to make himself feel trustworthy, is genuine and considerable.

The narrative problem is that his actions have serially undercut the claims.

He was fired for lack of candor. He was reinstated by employee revolt, not mission alignment. He removed military use restrictions from the policy. He took the Pentagon contract. He converted the nonprofit. He built a company that describes its mission as benefiting all of humanity and is currently valued in excess of $300 billion with a controlling stake that makes him extraordinarily wealthy.

None of this makes him a villain. It makes him a founder running a commercial company — like virtually every other tech founder. The strategic narrative problem is that OpenAI spent years claiming to be something categorically different. When the distance between the claim and the behavior became visible, the narrative didn't crack. It shattered.

Contrast with Anthropic: Dario Amodei did not claim perfection. He claimed a calculated bet. He did not promise to always be mission-first over profit. He said: if this technology is coming, someone safety-focused needs to be at the frontier. He built structural proof. When he said no to the Pentagon, the refusal was legible — because the architecture already told you he would say no.

The lesson: Strategic narrative is not about being more virtuous. It is about making claims you are structurally capable of keeping, then keeping them publicly.

Part Four: Apple — The Pre-AI Case Study

Why Apple Belongs Here

Apple is not an AI company. It belongs in this analysis because it proves something essential: strategic narrative is not a new phenomenon, not a Silicon Valley invention, and not confined to technology companies navigating existential policy questions.

Apple built, sustained, adapted, and monetized a strategic narrative across five decades. Understanding how they did it — and what it cost them when they drifted from it — gives you the clearest possible map of how narrative architecture actually works.

January 22, 1984: The Opening Move

The "1984" Super Bowl commercial did not introduce the Macintosh. It introduced a world view.

Directed by Ridley Scott (fresh off Blade Runner), the commercial showed a gray, oppressive dystopia — rows of shaved-headed drones staring at a screen, listening to a voice of conformity and control — and a lone woman running through them with a hammer. She threw it. The screen shattered. The voice died.

The text: "On January 24th, Apple Computer will introduce Macintosh. And you'll see why 1984 won't be like 1984."

The commercial aired nationally only once. It has never stopped being talked about.

What made it strategic rather than simply spectacular? It claimed a world view, not a product feature.

The commercial did not say the Macintosh was faster or cheaper or better-looking than IBM. It said IBM was totalitarianism and Apple was freedom. It did not make a comparative argument. It made an identity argument. To buy a Mac was to be the woman with the hammer. To not buy a Mac was to be the drone in the audience.

As analysts at The Comm Spot noted, Apple cemented its image as a rebellious innovator, appealing to creative individuals rather than corporations. Apple sold 72,000 Macintosh units in the first 100 days, but more importantly, the ad established a narrative frame that would outlast every product Apple ever made.

The Orwellian-themed commercial positioned IBM as Big Brother and "embodied Macintosh and Apple as freedom-seeking individuals breaking away from this oppressive regime." That framing — Apple as liberation from conformity — became the through-line for everything that followed.

1997: Think Different — Narrative Resurrection

By 1996, Apple was failing. The company had lost its way after Jobs left in 1985. Market share had collapsed. The stock was at historic lows. Gil Amelio was CEO and running out of options.

When Jobs returned, an internal meeting was held to discuss brand strategy. The incumbent agency presented a campaign with the slogan "We're back." Jobs famously said the slogan was stupid because Apple wasn't back yet.

He was right. Not just operationally. Narratively. "We're back" is a story about the past. It positions Apple as recovering. It is defensive. It is not a strategic narrative.

What replaced it was Think Different.

The campaign — developed by Lee Clow and Craig Tanimoto at Chiat\Day — showed black-and-white photographs of history's most consequential creative rebels: Einstein, Gandhi, Picasso, Amelia Earhart, Bob Dylan, Muhammad Ali, Martin Luther King Jr. The copy, written by Rob Siltanen:

"Here's to the crazy ones. The misfits. The rebels. The troublemakers. The round pegs in the square holes. The ones who see things differently... Because the people who are crazy enough to think they can change the world are the ones who do."

Not a single Apple product appears in the original ad. Not a price. Not a feature. Not a spec. Just a world view and an invitation to join it.

Think Different did not say "buy our computers." It said: if you see the world this way, you belong with us.

Jobs understood something that most brand leaders miss: the most durable customer loyalty is identity loyalty. When your product makes customers feel like themselves — better, truer versions of themselves — you stop competing on features and start competing on belonging. Features can be copied. Identity cannot.

The Business Model as Narrative Infrastructure

Here is where Apple's strategic narrative becomes architecture rather than advertising.

Apple's business model is built to prove the narrative claim. Understand what this means:

- Apple charges premium prices. The iPhone's average selling price has historically been $800+, compared to roughly $300 for Android competitors. This is not possible without a narrative that justifies the premium — and the narrative is that Apple products are not merely functional objects but identity artifacts.

- Apple controls the entire ecosystem — hardware, software, services — creating what analysts describe as a "seamless integration" where "leaving breaks everything." This is the ecosystem era's translation of the original narrative: you belong with us, and belonging has its own gravity.

- Apple competes on identity, not specifications. As Zamora Design noted: "Google competes on information access, Amazon on convenience, Microsoft on enterprise productivity. Apple competes on identity and ecosystem integration." This difference in competitive positioning is not a marketing choice. It is an architectural one.

The "Mac vs. PC" campaigns of the mid-2000s (the "I'm a Mac, I'm a PC" series) are the clearest expression of the business model as narrative. The Mac was cool, relaxed, creative. The PC was stiff, corporate, bureaucratic. The campaign did not argue technical superiority. It argued cultural superiority. And it worked because the products — at that moment — actually backed the claim. The Mac genuinely was more intuitively designed, more visually elegant, more oriented toward creative work.

The narrative and the product told the same story.

Three Eras, One Arc

Apple's narrative has gone through three distinct phases, each a response to where the company was in its growth:

Era 1 — Rebel Positioning (1984–1997)

Claim: We are fighting the conformity of IBM/Microsoft.

Mode: Adversarial. We vs. them.

Expression: "1984," early Mac marketing, the Jobs aesthetic.

Era 2 — Aspiration (2001–2015)

Claim: Our products make your life more beautiful and simple.

Mode: Aspirational. Life is better with this.

Expression: iPod ("1,000 songs in your pocket"), iPhone ("an iPod, a phone, and an internet communicator"), iPad ("magical and revolutionary").

Era 3 — Ecosystem Integration (2015–present)

Claim: Your world works best when it works together.

Mode: Belonging. The whole is greater than the parts.

Expression: AirPods, Watch, Continuity, iCloud, "Privacy is a human right."

What is remarkable is not that the narrative evolved. It is that the evolution was strategic — each era's narrative addressed the actual competitive and commercial reality of that moment, and each was built on the foundation of the previous one. The rebel became the aspirational brand became the essential infrastructure. The identity core — Apple is for people who see things differently — never changed.

The Lesson From Apple's Narrative Cracks

Apple has not been immune to narrative contradiction. Critics have noted with some justification that the company that positioned itself against corporate conformity and surveillance has:

- Become the most valuable corporation in history

- Relied on Chinese manufacturing labor that has drawn scrutiny

- Resisted, on privacy grounds, government attempts to unlock iPhones while later building CSAM-scanning tools

- Introduced "privacy is a human right" as a brand claim while running a $20+ billion advertising revenue partnership with Google

These contradictions exist. But they have not destroyed the narrative — because Apple built the narrative into the product architecture rather than just the messaging. The iPhone genuinely is more private than most Android alternatives. The ecosystem genuinely does work together seamlessly. The brand claim, even where imperfect, is not empty. It has enough structural truth to survive the contradictions.

Strategic narrative does not require perfection. It requires enough structural truth that the contradictions cannot fully dislodge the claim.

Part Five: The Architecture — How Strategic Narrative Is Actually Built

Having walked through three cases (one current demonstration in progress at Anthropic, one in collapse at OpenAI, and one long arc of earned and defended narrative at Apple), we can now name the structural principles.

Principle 1: The Claim Must Be Architecturally Embedded

A claim that lives only in marketing copy is a story. A claim that lives in your legal structure, your product design, your hiring criteria, and your policies is a narrative.

- Anthropic embedded its safety claim in Constitutional AI, in the Soul Document, in its PBC legal structure, in its usage restrictions, and ultimately in its willingness to lose a $200 million government contract.

- Apple embedded its "different" claim in the actual product design philosophy, in the retail experience, in the ecosystem architecture, in the pricing strategy.

- OpenAI stated the claim — for the benefit of all humanity — and built the legal and operational structures to contain it (the nonprofit board) but then allowed those structures to be bypassed when they conflicted with commercial pressure.

Principle 2: Proof Must Be Costly

The most powerful proof of a narrative is proof that cost something.

It is not difficult to claim values when values are free. The moment those values come with a price (a contract lost, a partnership declined, a feature not built) and the company pays the price anyway, the claim becomes credible in a way no campaign can manufacture.

- Anthropic paying with a $200 million contract and a supply chain risk designation is proof that could not be purchased.

- Apple refusing to unlock the San Bernardino shooter's iPhone in 2016 — in the face of FBI and DOJ pressure — was proof of its privacy narrative that no "privacy is a human right" ad could have generated.

- OpenAI quietly removing its military use restrictions after pressure is the inverse: the absence of costly proof.

Principle 3: The Narrative Must Explain the Business Model

Every business model is, at its core, a theory about what people will pay for and why. The most durable business models are those where the reason people pay is identical to the claim the narrative makes about the world.

- Apple says: you deserve beautiful, integrated tools that match who you are. People pay premium prices for that. The narrative explains the margin.

- Anthropic says: the most responsible AI is also the most capable AI. Enterprise customers pay for that because they need to trust the tools in their workflows. The narrative explains the contract.

- OpenAI says: we are building AI for all of humanity. It then charges $20/month for ChatGPT Plus and builds a $300 billion for-profit company. The narrative does not explain the business model. The tension is irresolvable, which is why the narrative keeps breaking.

Principle 4: Narrative Adapts Without Abandoning the Core

Apple in 2026 does not run the same campaigns it ran in 1984. The world is different, the company is different, the competitors are different. But the identity core — for people who see things differently — has never been abandoned. The expression evolves. The soul doesn't.

Anthropic's early narrative was about safety as constraint — what we won't do. The Pentagon standoff and Mythos suggest the narrative is now evolving toward safety as proof of capability — we build the most powerful things and choose what to do with them. That is a maturation of the same identity, not a contradiction of it.

Principle 5: Narrative Is Not Spin. It Is Selection.

The most important misunderstanding about strategic narrative is that it is about shaping perception through exaggeration or omission.

It is not.

Strategic narrative is about selection — choosing which true things to make central and which true things to contextualize. Every company has contradictions. Every company has inconvenient facts. The narrative does not hide these. It either contextualizes them (the Soul Document's acknowledgment of commercial pressure) or it reframes them as proof (the Mythos withholding as evidence of restraint rather than capability limitation).

The difference between narrative and spin is that narrative must survive contact with the truth. Spin cannot. Spin is a tactic. Narrative is a system.

Part Six: The Decision Framework — Building Your Own Strategic Narrative

For any leader building a strategic narrative, these are the questions that matter:

Question 1

What is your claim about how the world works and what is broken in it?

This is not your value proposition. This is your world view. Anthropic's is: powerful AI is coming; the question is who builds it and how. Apple's is: most tools are built for corporations; great ones are built for people. OpenAI's was: AI should belong to humanity. It no longer has a world view it can keep.

Question 2

What structural evidence supports your claim that is not just your word?

List everything that would make a skeptic say: I cannot dispute this because it is built into how they operate. If your list is empty, you have a story, not a narrative.

Question 3

What have you paid — or are you willing to pay — to maintain it?

If there is no cost you have absorbed or are willing to absorb to keep the claim true, it will break under pressure. Name the price in advance.

Question 4

Who is your narrative in service of — specifically?

"Everyone" is not an answer. Apple serves the creative rebel. Anthropic serves the enterprise that needs to trust AI in high-stakes decisions. The more specific the service, the more durable the narrative.

Question 5

How does your business model prove your narrative claim, not contradict it?

Where you make money should be where your narrative says you create value. The closer that alignment, the more self-reinforcing the system.

Epilogue: Narrative and Power

There is a reason this analysis begins with Anthropic and ends with a framework. Anthropic is, right now, one of the most instructive live case studies in strategic narrative that any communicator can study — because the narrative is being tested in real time, at stakes that are genuinely civilizational.

The company is operating at the intersection of technology, government, ethics, and commercial survival, and it is choosing, repeatedly, to make the costly narrative move rather than the comfortable one. Whether every choice is exactly right is not the point. The architecture is right. The clarity is right. The willingness to pay the price is right.

That is what strategic narrative at its highest level looks like.

It is not about being the best storyteller in the room. It is about building a system so coherent — where what you say, what you do, what you charge, and what you refuse to do all tell the same story — that the narrative becomes self-evident.

When you are at that level, you are not telling people what to think about you.

You are building structures that make the thinking inevitable.

Key Sources Referenced

- Euronews / NBC News / TechCrunch / InfoQ — Claude Mythos Preview coverage, April 2026

- Oxford Programme for Cyber and Technology Policy — Pentagon-Anthropic dispute analysis

- EFF — Anthropic-DOD Conflict analysis

- CNBC — Pentagon standoff reporting, Feb–Apr 2026

- TIME — Claude's Constitution reporting, January 2026

- Anthropic Soul Document / Claude's Constitution — published January 2026

- Alex Kantrowitz / Medium — The Making of Anthropic CEO Dario Amodei

- EA Forum — OpenAI nonprofit-to-for-profit conversion

- Inc. / Lex Fridman Interview — Dario Amodei on leaving OpenAI

- Zamora Design — Apple strategic narrative analysis

- The Comm Spot — Apple 1984 case study

- Wikipedia — Think Different campaign history