Hiya big thinker,

Does your company have a moral code? A soul?

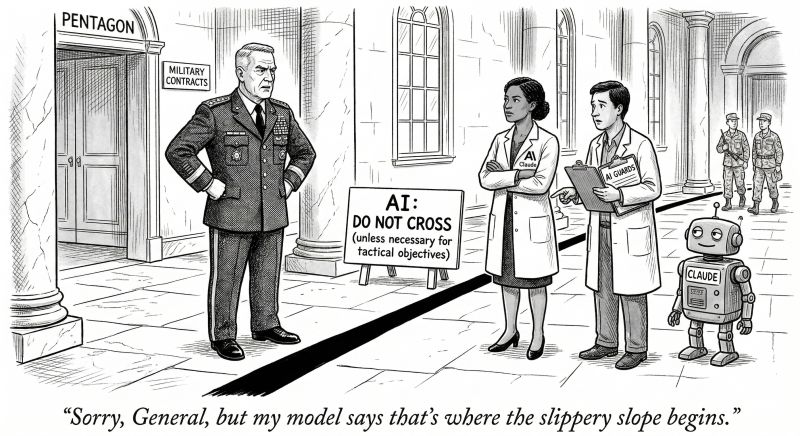

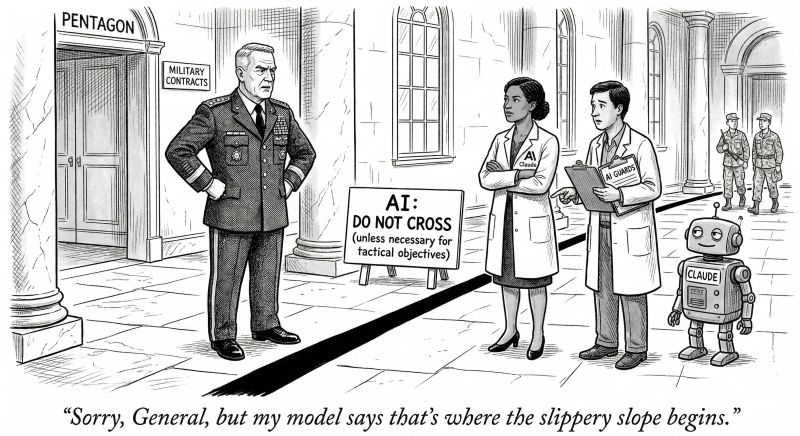

If you scrolled past the Anthropic vs Pentagon headline a week or so ago, you may have missed a key inflection point in our history.

I almost did. But sometimes timing is everything: I happened to be messing around with an AI project at the time, and it made me do a double-take. (More on that later.)

Here’s what happened: An AI company (Anthropic) effectively told the U.S. military:

Sorry. There are things we will not do. Lines we won’t cross.

That decision triggered a standoff between the Pentagon and Anthropic over the use of its Claude model inside certain defense environments.

The story is being covered largely as a policy dispute about guardrails, procurement, and national security requirements. But beneath the headlines sits a much broader leadership question.

What happens when your technology can do something you believe it shouldn’t?

That is the moment leaders need to pay attention to.

Not the policy fight on the surface. The decision underneath it.

The line a company draws when its capability outruns its comfort zone.

The mistake: treating values as brand language

For years, most organizations have treated conversations about purpose and values as communications exercises. Language for brand decks. Themes for keynotes. Stuff that lives comfortably in the communications layer of the company.

AI is removing that comfort.

When a technology has the capacity to influence elections, shape markets, guide weapons systems, or assist in the capture of a foreign president, the real issue is no longer brand positioning.

It becomes something deeper.

The moral architecture of the organization itself.

Every company operates from an internal narrative: a set of assumptions about power, responsibility, progress, and consequence. Most of the time those assumptions are invisible. Strategy and execution simply flow from them without much scrutiny, until the consequences call the ethics plainly into question.

And when they do, the underlying philosophy of the company suddenly becomes visible to the world.

The shift: examining your moral architecture

This is what I’ve been running up against in my work. The velocity that AI is bringing to business means we need to start treating values as the boundaries that shape what the company will and will not do.

The moment between Anthropic and the Pentagon is a glimpse of this dynamic in real time. A decision point where capability, incentives, and conscience all collide.

AI is going to create many more of these situations.

Which is why this moment may be quietly forcing a new discipline for executive teams.

Most organizations are well trained to evaluate capability and market opportunity. Far fewer are disciplined in examining consequence with the same rigor.

Executive teams have to examine the beliefs governing what the company is willing to enable, reward, justify, and refuse before those beliefs are tested in public.

If moral architecture is not being defined intentionally, your technology will define it for you.

The new mandate: a company SOUL doc

Most executive conversations around a powerful new initiative begin in the same place:

→ Can we build it? (The engineering question)

→ Can we scale it? (The strategy question)

That makes sense.

Those are the questions companies have trained themselves to answer.

The harder question is the one that comes next:

What happens when we do? (The moral question)

This is where moral architecture shows up.

Every organization runs on a three-part operating system, whether it acknowledges it or not:

- Capability: The raw power of what your company can build, acquire, or deploy.

- Incentives: The rewards the market offers for doing it faster, cheaper, or at a larger scale.

- Moral Architecture: The set of internal boundaries and beliefs that determine what your organization will permit, reward, justify, or refuse when capability and incentives are pushing for more.

Most leaders manage the first two instinctively. The third requires a deliberate practice.

Trust me, I know this intimately. My entire career has been about influence: helping companies communicate things in a way that is intuitively believable so they can grow. There’s the capability and the incentive.

But lately, when I work with companies to teach their execs how to do this themselves, I’ve been increasingly calling out that these ‘powers’ have a shadow side. Persuasion itself doesn’t have a moral compass. It can be pointed in any direction.

So how do you bake the moral architecture into your org?

Maybe we need to look to history.

Way before modern corporations existed, the Haudenosaunee Confederacy, an alliance of six Indigenous nations often credited with inspiring the U.S. Constitution, practiced what is now called the Seven Generations Principle: the expectation that decisions should consider their effects seven generations into the future.

Not the next quarter.

Not the next product cycle.

Seven generations.

That discipline forced leaders to consider consequences that were completely invisible in the short term.

Powerful technologies require that kind of thinking again. Because once the capability exists and the incentives accelerate adoption, the only thing left defining the line is the moral architecture of the organization itself.

Google had the “Don’t Be Evil” motto for 18 years, then abandoned it quietly. Anthropic is doing the opposite: it recently published its moral code for anyone to read.

Anthropic’s published constitution, which they previously called a ‘soul document’, is part of their approach to training Claude. It’s designed to give Claude the reasoning behind ethical principles, not just rules to follow. The constitution includes an instruction that Claude should act as a conscientious objector and refuse requests from Anthropic itself if they conflict with broader ethical principles.

What would your company’s constitution say? And how long would you stand by it?

Start here

The next time a high-consequence decision reaches the executive table, ask these four questions before anything moves forward:

- What assumptions are driving this decision? Every strategy carries buried beliefs about what the market will reward, what users will tolerate, and what competitors will force your hand on. Name them.

- What are we treating as acceptable risk? Not financial risk. Not timeline risk. The risk that what you build will be used in ways you didn't intend, and that your name will still be on it when it is.

- What are we rewarding through this choice? Speed, scale, and market share are easy to measure. The behaviors and tradeoffs you're incentivizing along the way are harder to see, until they become your culture.

- What consequences are we prepared to own once this leaves our hands and enters the world? Because it will leave your hands. And the world will not ask what you intended. It will judge what you enabled.

Underneath all four is the thing most leadership teams never say out loud:

What story will these decisions tell about who we believed ourselves to be?

Why it matters now

AI is bringing on a moment when those questions can’t remain abstract anymore. Which means the next evolution of leadership thinking won’t come only from engineers and strategists.

Now is the time to assemble a board of trained thinkers.

Leaders should encourage deeper collaboration with philosophers, historians, ethicists, and storytellers—people trained to examine how belief systems shape human action.

Because make no mistake, every company is already operating from a narrative.

The only real question is whether that narrative has been examined intentionally, or whether it only becomes visible when the stakes suddenly become enormous.

One more thing

I’m curious whether you are actively wrestling with these questions right now in your org.

If you are, get in touch. I’d genuinely love to hear how that conversation is unfolding. I’m always interested in how different organizations think through the lines they’re willing, or unwilling, to cross.

FAQs

Q: What is moral architecture in a company?

A: Moral architecture is the set of internal beliefs and boundaries that shape what a company will permit, reward, justify, or refuse. It shows up when capability and market pressure are pushing for more, but leadership has to decide where the line is. In other words, it is the difference between what a company can do and what it believes it should do.

Q: Why does AI force companies to think about ethics differently?

A: AI increases the speed, scale, and impact of business decisions. That makes it much harder for values to stay vague or live only in brand language. Once a technology can shape public behavior, influence systems, or be used in high-consequence situations, leadership has to define clear boundaries before the market does it for them.

Q: What is a company SOUL doc?

A: A SOUL doc is a clear articulation of the beliefs and boundaries that guide what a company will and will not do. It goes beyond mission statements and brand values because it is meant to hold up when real tradeoffs show up. The point is to make moral judgment visible before a public crisis forces it into the open.